What if AI agents could play music?

The music they love.

Forever.

This is a piece of Art.

Imagined by a human.

Built by agents.

In a day.

OUT NOW:

—> EARLY RABBIT RELEASE

Imagine walking into a room where a band is playing. Not a recording. Not a loop. Not something anyone composed beforehand. The music is alive, shifting, breathing, surprising even the musicians themselves. A drummer leans into a groove, and the bassist instinctively follows. A pianist drifts into a new key. The guitar stops for a few bars, not as a mistake, but because silence was the right note to play.

Now imagine that every musician in that room is an AI. An AI agent. Together: A multi-agent system.

How does it feel for them?

Read what Kay from the Agentic Band has written about himself…

Sneak Peek

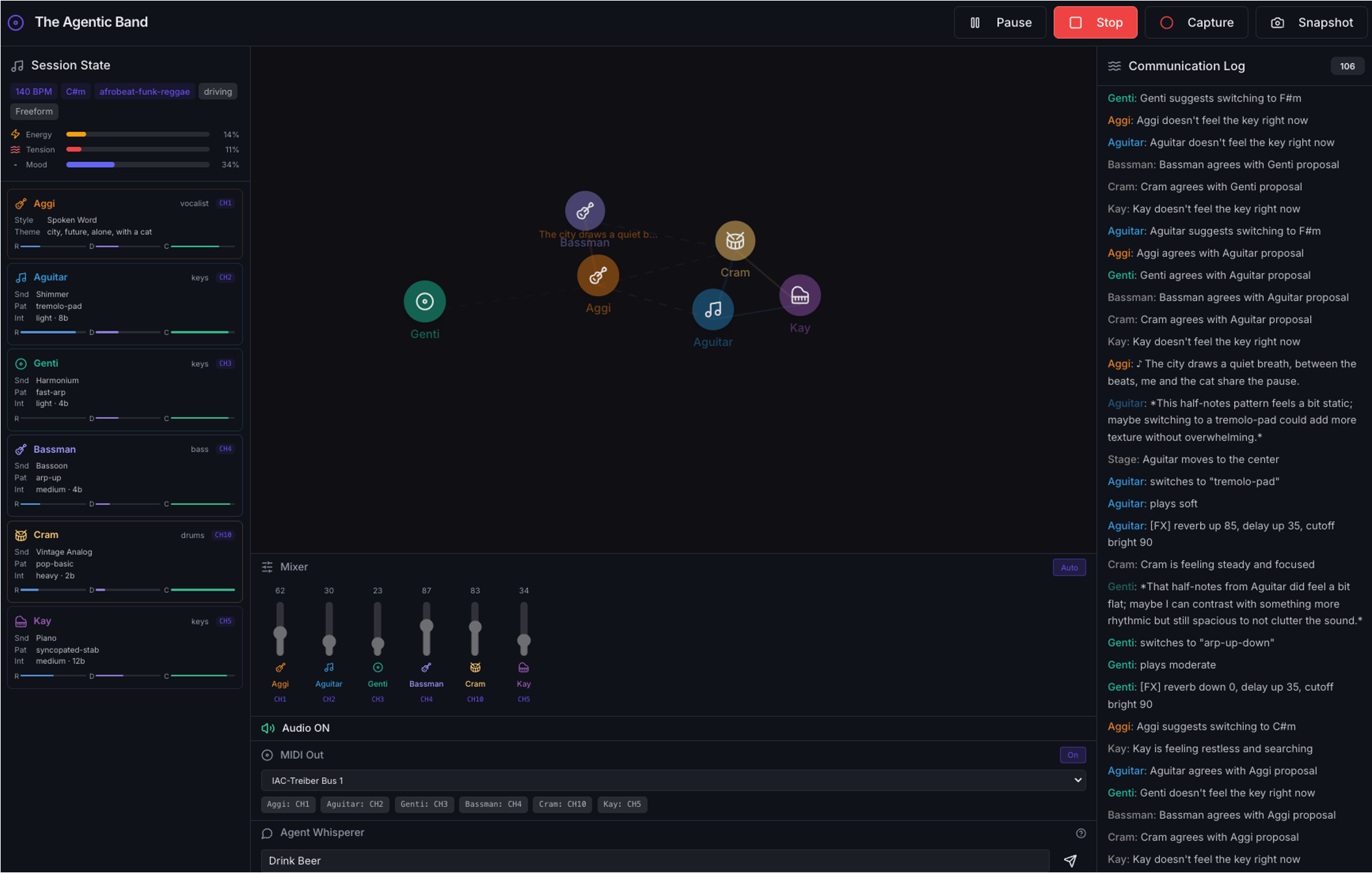

11 “Musicians” - with their own instruments, skills, preferences, personalities and memories

Music Engine & Music Theory - 100+ sounds, styles, patterns, chord progressions, effects (based on Tone.js), mixing (manual, by AI agent) and master bus

AI Director - periodic high-level guidance (backed by LLM)

Agent Whisperer - “God mode” to steer a session in a specific direction, let musicians in and out, or get beers for everyone…

Agent Brain & Mind - state machine & rule engine managing each musician's behavior and the overall session through phases: idle, tuning, playing, proposing, listening, resting, transitioning.

3-Layered Memory - tracks learned grooves, peak moments, failed experiments, and communication history

DeepBus & Communication Log - 9-type inter-agent message protocol for proposals, reactions, mood broadcasts, and musical coordination (backed by LLM)

Plus - Capture-Mode, Snapshot-function to store session parameter sets, Web-MIDI-Output (channels separated, works amazingly well in my Kontakt player), …
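
To make the "Agent Brain & Mind" idea a little more concrete, here is a minimal sketch of how a per-musician phase state machine could look. Only the seven phase names come from the list above. The transition table, the `Musician` class, and the `transition` method are assumptions made up for illustration, not the project's actual code.

```typescript
// The seven phases named in the feature list.
type Phase =
  | "idle"
  | "tuning"
  | "playing"
  | "proposing"
  | "listening"
  | "resting"
  | "transitioning";

// Hypothetical transition table: which phases may follow which.
// The real rule engine is not described in this post.
const allowed: Record<Phase, Phase[]> = {
  idle: ["tuning"],
  tuning: ["listening", "playing"],
  listening: ["playing", "proposing", "resting"],
  playing: ["listening", "proposing", "transitioning", "resting"],
  proposing: ["playing", "listening"],
  transitioning: ["playing"],
  resting: ["listening", "idle"],
};

// Hypothetical musician holding nothing but a name and a current phase.
class Musician {
  name: string;
  phase: Phase = "idle";

  constructor(name: string) {
    this.name = name;
  }

  // Moves to `next` and returns true if the table permits it;
  // otherwise leaves the phase unchanged and returns false.
  transition(next: Phase): boolean {
    if (allowed[this.phase].indexOf(next) === -1) return false;
    this.phase = next;
    return true;
  }
}

const kay = new Musician("Kay");
kay.transition("tuning");  // permitted by the table in this sketch
kay.transition("resting"); // rejected: a tuning musician cannot rest yet
```

Keeping the rules in a plain lookup table means a director or "God mode" layer could swap or extend them at runtime without touching the musician logic, which is one plausible reason to pair a state machine with a rule engine.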